Introduction

AI chat agents have become an increasingly popular tool for businesses and individuals alike. These virtual assistants answer user queries automatically, helping to streamline customer support, improve user experiences, and deliver instant solutions. However, accurate and relevant responses are crucial to maintaining customer satisfaction, so it is essential to evaluate those responses and to have effective mechanisms for improving and controlling them. In this article, we explore how SeaChat, an AI chat agent platform, lets you evaluate and improve AI responses over time.

Enhance Customer Experience using SeaChat AI Agent

SeaChat: An Overview

SeaChat is a cutting-edge platform that lets businesses and individuals create and deploy AI chat agents easily, without writing code. With SeaChat, users can apply artificial intelligence to automate communication, improve efficiency, and reduce response times. Its intuitive interface makes it easy to train AI agents, customize their responses, and monitor their performance.

Evaluating AI Agent Responses

Evaluating AI agent responses is vital to ensure that they meet user expectations and provide accurate information. In an AI-driven environment, where machines attempt to mimic human-like communication, it is crucial to continuously assess the responses generated by chat agents. Accuracy and relevance are the cornerstones of successful AI interactions.

SeaChat’s Feedback System

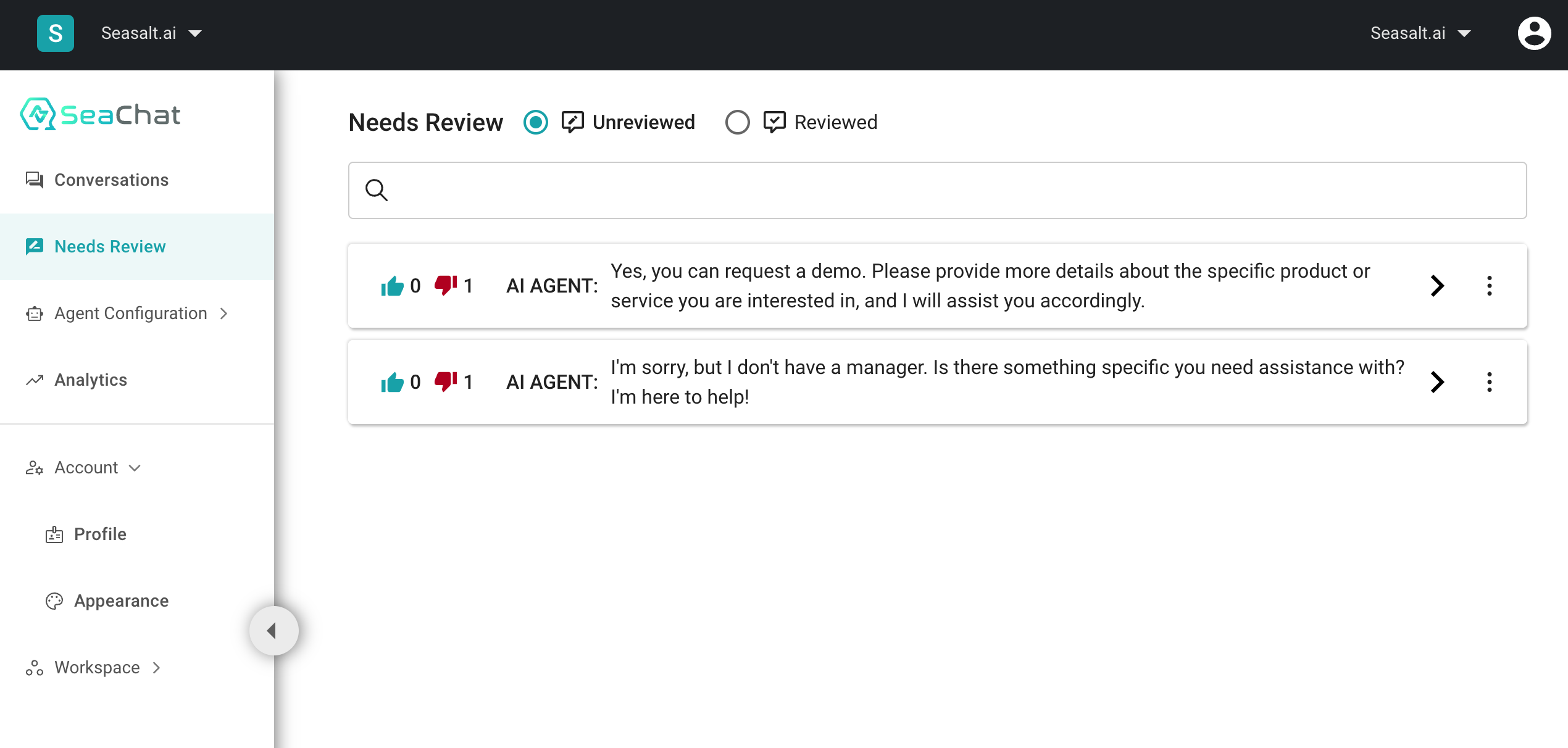

SeaChat has a review system for AI agents

SeaChat incorporates a robust feedback system that lets users give feedback on AI agent responses. Its central element is a pair of thumbs-up and thumbs-down buttons placed conveniently in the chat interface. These buttons let anyone interacting with a SeaChat AI agent express satisfaction or dissatisfaction with the response they received.

Flagging Problematic Responses

When users encounter problematic or inappropriate responses from a SeaChat AI agent, they can flag them with the thumbs-down button. This feedback mechanism lets users play an active role in maintaining the quality of AI responses. Flagging problematic responses is a critical step in keeping the AI agent in check and ensuring a positive user experience.
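To make the flagging mechanism concrete, here is a minimal Python sketch of how thumbs-up and thumbs-down votes could be recorded per response, with any thumbs-down treated as a flag. The names `Vote`, `FeedbackLog`, `record`, `is_flagged`, and `satisfaction_rate` are hypothetical; this is not SeaChat's API or implementation, and SeaChat may store feedback quite differently.

```python
from collections import Counter
from enum import Enum
from typing import Optional


class Vote(Enum):
    """One thumbs-up or thumbs-down click on a single agent response."""
    UP = "up"
    DOWN = "down"


class FeedbackLog:
    """Hypothetical in-memory vote store keyed by response ID (not SeaChat's API)."""

    def __init__(self) -> None:
        self._votes: dict[str, Counter] = {}

    def record(self, response_id: str, vote: Vote) -> None:
        # Count each click against the specific response it refers to.
        self._votes.setdefault(response_id, Counter())[vote] += 1

    def is_flagged(self, response_id: str) -> bool:
        # In this sketch, a single thumbs-down is enough to flag a response.
        return self._votes.get(response_id, Counter())[Vote.DOWN] > 0

    def satisfaction_rate(self, response_id: str) -> Optional[float]:
        # Fraction of votes that were thumbs-up; None if nobody has voted.
        counts = self._votes.get(response_id, Counter())
        total = counts[Vote.UP] + counts[Vote.DOWN]
        if total == 0:
            return None
        return counts[Vote.UP] / total


log = FeedbackLog()
log.record("resp-1", Vote.UP)
log.record("resp-1", Vote.DOWN)
log.record("resp-1", Vote.UP)
print(log.is_flagged("resp-1"))                   # True
print(round(log.satisfaction_rate("resp-1"), 2))  # 0.67
```

Keying votes by response ID, rather than by whole conversation, is what lets an owner later see exactly which answer a user objected to.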

Reviewing Flagged Content

SeaChat provides an intuitive dashboard that lets AI agent owners review all the content flagged by users. This dashboard serves as a central hub where agent owners can monitor and manage flagged responses. By examining the flagged content, the relevant conversation history, and the knowledge documents the AI used, agent owners can identify patterns, common issues, and areas that need improvement.
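The owner-side review step can be sketched as a queue that merges repeated flags on the same response, keeps the knowledge documents the agent used, and lists unreviewed items with the most-flagged first. This is a hypothetical Python model: `FlaggedResponse`, `ReviewQueue`, and the assumption that source documents are logged per response are all illustrative, not a description of SeaChat's dashboard internals.

```python
from dataclasses import dataclass, field


@dataclass
class FlaggedResponse:
    """One agent response with at least one thumbs-down (hypothetical model)."""
    response_id: str
    text: str
    flag_count: int = 0
    reviewed: bool = False
    # Knowledge documents the agent drew on for this answer (assumed to be logged).
    source_docs: list[str] = field(default_factory=list)


class ReviewQueue:
    """Hypothetical owner-side queue: unreviewed items only, most-flagged first."""

    def __init__(self) -> None:
        self._items: dict[str, FlaggedResponse] = {}

    def flag(self, response_id: str, text: str, source_docs: list[str]) -> None:
        item = self._items.get(response_id)
        if item is None:
            item = FlaggedResponse(response_id, text, source_docs=list(source_docs))
            self._items[response_id] = item
        # Several users can flag the same response; each flag raises its priority.
        item.flag_count += 1

    def mark_reviewed(self, response_id: str) -> None:
        # Reviewing keeps the record; nothing is deleted automatically.
        self._items[response_id].reviewed = True

    def pending(self) -> list[FlaggedResponse]:
        # Unreviewed items, sorted so the most-flagged responses come first.
        open_items = [i for i in self._items.values() if not i.reviewed]
        return sorted(open_items, key=lambda i: i.flag_count, reverse=True)


queue = ReviewQueue()
queue.flag("r1", "Our store opens at 8am.", ["hours.md"])
queue.flag("r2", "Refunds take 90 days.", ["refunds.md"])
queue.flag("r2", "Refunds take 90 days.", ["refunds.md"])
print([item.response_id for item in queue.pending()])  # ['r2', 'r1']
queue.mark_reviewed("r2")
print([item.response_id for item in queue.pending()])  # ['r1']
```

Marking an item as reviewed leaves the response record in place instead of deleting it, which matches the human-in-the-loop behavior described in the FAQs.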

Editing and Improving the Knowledgebase

The knowledgebase is the foundation of AI agent responses and determines how accurately an agent can answer. With SeaChat, agent owners can edit and improve their knowledgebase based on flagged content and user feedback. This enables a continuous process of refining the AI agent's knowledge, leading to more precise and relevant responses over time.

The Evolution of AI Agent Responses

By using SeaChat's feedback system and making appropriate edits to the knowledgebase, AI agent owners can watch their agent's responses improve. Regular feedback, acted on through knowledgebase edits, strengthens the agent's ability to understand user queries and provide meaningful solutions. This iterative cycle of feedback, review, and improvement produces AI agents whose performance keeps improving, giving users high-quality responses.

Conclusion

SeaChat's feature for evaluating AI agent responses through user feedback, and for letting agent owners review flagged content and improve their knowledgebase, is a game-changer in the world of AI chat agents. Because they can flag problematic responses, users have the power to shape the quality of AI interactions. Agent owners, in turn, can use this feedback to refine their AI agents so that they consistently deliver accurate and relevant responses. The result is an effective feedback loop that drives AI improvement and a superior user experience.

FAQs

FAQ 1: How long does it take for the AI agent to improve based on feedback?

Improvements take effect as soon as you update the knowledgebase; no retraining is required.
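One common way to get improvements without retraining is to have the agent look up knowledge documents at answer time, so an edited document is used on the very next query. The article does not say how SeaChat does this internally; this sketch assumes such a retrieval step and uses a deliberately simple word-overlap lookup in place of the embedding search and language model a production system would typically use. `KnowledgeBase`, `upsert`, and `retrieve` are hypothetical names.

```python
import re
from typing import Optional


def _words(text: str) -> set[str]:
    # Lowercased word set used for a crude relevance score.
    return set(re.findall(r"\w+", text.lower()))


class KnowledgeBase:
    """Hypothetical document store standing in for an agent's knowledgebase.

    Assumes answers are grounded in documents retrieved at query time,
    so editing a document changes the next answer with no retraining.
    """

    def __init__(self) -> None:
        self.docs: dict[str, str] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        # Adding or editing a document just overwrites the stored text.
        self.docs[doc_id] = text

    def retrieve(self, query: str) -> Optional[str]:
        # Pick the document sharing the most words with the query.
        if not self.docs:
            return None
        q = _words(query)
        best = max(self.docs, key=lambda doc_id: len(q & _words(self.docs[doc_id])))
        return self.docs[best]


kb = KnowledgeBase()
kb.upsert("refunds", "Refunds are processed within 90 days.")
kb.upsert("hours", "The store opens at 9am on weekdays.")
print(kb.retrieve("How long do refunds take?"))  # Refunds are processed within 90 days.

# The owner corrects the document after a thumbs-down; the next lookup sees it.
kb.upsert("refunds", "Refunds are processed within 5 business days.")
print(kb.retrieve("How long do refunds take?"))  # Refunds are processed within 5 business days.
```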

FAQ 2: Can multiple users flag the same response?

Yes, multiple users can flag the same response if they find it problematic or inaccurate. This helps in identifying responses that need immediate attention and guides the agent owner to take necessary corrective measures promptly.

FAQ 3: Are all flagged responses automatically removed from the system?

No, flagged responses are not automatically removed from the system. Instead, they are brought to the agent owner's attention, and the owner can review and analyze the flagged content to decide what to improve. This human-in-the-loop approach avoids unintended removal or censorship of valuable responses.

FAQ 4: What happens if the agent owner doesn't review flagged content?

If the agent owner does not review flagged content, users may experience continued issues with problematic responses. However, SeaChat is designed to highlight unreviewed flagged content in the dashboard, prompting the agent owner to address them promptly. Regular review and improvement are encouraged for optimal performance and a seamless user experience.